Overview

Computer programs have come a long way since the 1900s. The algorithms underlying such programs have even started to replace certain types of management decisions in organizations. For example, drivers for Uber and Lyft and food delivery workers for DoorDash and GrubHub rely almost exclusively on algorithms. But some claim we're still quite a ways off from AI superiority: algorithms need managerial judgment to set the right goals, input reliable data, and understand the more subjective parts of life, like ethics and emotions (Luca et al., 2016).

Recent research suggests that technical decisions like maintenance or scheduling don't generate significant differences in perceived fairness or trust between algorithms and human managers, though algorithms are viewed favorably when it comes to efficiency and impartiality (Lee, 2018). But for more subjective tasks like hiring or employee performance evaluation, algorithms are perceived as less fair and trustworthy.

COVID-19 has cast a spotlight on the importance of AI vs. manager decision making in the context of public health. For example, the distribution of scarce resources needed to maintain public health, such as protective medical masks, has become a critical issue. So we ran an experiment testing whether people perceive algorithms or managers as more fair, trustworthy, efficient, and effective during the COVID-19 pandemic.

The Experiment

We had 400 people from Amazon MTurk participate in a survey experiment: a randomized controlled trial using a vignette scenario about a process for distributing masks during COVID-19. Participants read the following scenario, with either "computer algorithms" or "managers' judgment" randomly displayed:

Throughout the COVID-19 pandemic there has been a shortage of N-95 masks for healthcare workers and other essential workers.

To decide who gets a mask and who does not, some hospitals have resorted to using computer algorithms [managers' judgment] to allocate the limited number of masks.

To what extent do you think each of the following words describe this process. (1 = Not at all; 7 = Extremely)

* Fair

* Efficient

* Effective

* Trustworthy

Participants answered the four-item survey question using a 1-7 scale for each item.
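As a rough illustration of the design described above, the sketch below assigns each participant to one of the two vignette wordings at random and fills the wording into the scenario text. It assumes simple (coin-flip style) randomization per participant; the survey platform's actual randomization method is not stated here, and the function names and the seed are hypothetical.

```python
import random

# The two wordings and four rating items come from the scenario above.
CONDITIONS = ("computer algorithms", "managers' judgment")
ITEMS = ("Fair", "Efficient", "Effective", "Trustworthy")

VIGNETTE = (
    "To decide who gets a mask and who does not, some hospitals have "
    "resorted to using {condition} to allocate the limited number of masks."
)


def assign_conditions(n_participants, seed=None):
    """Independently pick one wording per participant (assumed simple randomization)."""
    rng = random.Random(seed)
    return [rng.choice(CONDITIONS) for _ in range(n_participants)]


def render_vignette(condition):
    """Insert the assigned wording into the scenario text."""
    return VIGNETTE.format(condition=condition)


# Hypothetical run with 400 participants, matching the sample size above.
assignments = assign_conditions(400, seed=42)
print(render_vignette(assignments[0]))
print({c: assignments.count(c) for c in CONDITIONS})
```

With simple randomization the two groups will usually be close to, but not exactly, equal in size; a design that forces a 200/200 split would shuffle a fixed list of labels instead.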

Results

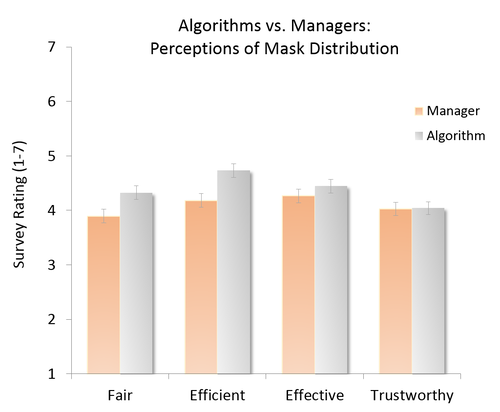

In this battle of man vs. machine, the machine claims two modest victories. Having a computer algorithm decide how to distribute important scarce resources was perceived to be more fair and more efficient than managerial judgment. The algorithm was rated 11.0% more fair (0.42 points; p = 0.017) and 13.2% more efficient (0.55 points; p = 0.002) than managers' judgment. There was no effect on perceived trust or effectiveness (i.e., reducing COVID).
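The percentage and point figures above are linked by simple arithmetic. The sketch below assumes each percentage is the point difference divided by the managers' judgment group mean; that baseline is an assumption, since the text does not say how the percentages were computed. Under that assumption, the reported numbers imply approximate group means, which are back-calculated from rounded figures and are not reported results.

```python
def percent_difference(point_diff, baseline_mean):
    """Percentage difference relative to a baseline mean."""
    return 100.0 * point_diff / baseline_mean


def implied_baseline(point_diff, percent_diff):
    """Baseline mean implied by a point difference and a percentage difference."""
    return point_diff / (percent_diff / 100.0)


# (point difference, percent difference) as reported in the text.
reported = {"fair": (0.42, 11.0), "efficient": (0.55, 13.2)}

for item, (points, pct) in reported.items():
    base = implied_baseline(points, pct)  # assumed: managers' judgment mean
    print(f"{item}: implied managers' mean ~ {base:.2f}, "
          f"implied algorithm mean ~ {base + points:.2f}")
```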

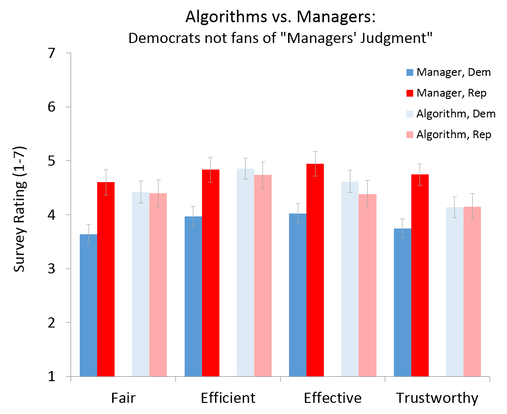

But is this really a story of AI algorithms winning? Or is it managers losing? When we analyze the results by political affiliation, we find an interesting possible story. Republicans' perceptions of the fairness and efficiency of algorithms and of managers' judgment are not significantly different from each other, nor do they differ much from Democrats' perceptions of algorithms. But Democrats' ratings of managers' judgment are consistently lower. Perhaps some people, in this case our more liberal respondents, trust the fairness of human managers less than that of computer algorithms.

Conclusion

Contrary to prior research findings, algorithms seem to be perceived as slightly more fair and efficient than human managers' judgment, at least in the context of a public health crisis and the distribution of important resources. But more research should look into this man vs. machine debate in other contexts and for other types of decisions. We may find even more surprising results, particularly across different groups of people.

References

Brustein, J. (2019). The Gnawing Anxiety of Having an Algorithm as a Boss. Bloomberg. June 26, 2019.

Lee, M. K. (2018). Understanding perception of algorithmic decisions: Fairness, trust, and emotion in response to algorithmic management. Big Data & Society, 5(1), 1-16.

Luca, M., Kleinberg, J., & Mullainathan, S. (2016). Algorithms need managers, too. Harvard Business Review, 94(1), 20.

Methods Note

We used ordinary least squares (OLS) regression analyses to test for significant differences in perceived fairness, trust, efficiency, and effectiveness between computer algorithms and managers' judgment. For a significant difference, the gap between the two groups' averages is large relative to the variation in responses, and its corresponding “p-value” is small. If the p-value is less than 0.05, we consider the difference statistically significant, meaning a difference this large would be unlikely to arise by chance alone if the two groups truly did not differ. To test whether differences for specific groups differ significantly from their counterparts (e.g., Republicans vs. Democrats), we used OLS regression analyses with interaction terms.
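To make the approach above concrete, here is a minimal sketch of both regressions on synthetic data. Everything in it is an assumption for illustration: the ratings are randomly generated (not the study's data), the variable names are hypothetical, and the small solver stands in for a statistics package. It shows that with a single 0/1 treatment indicator the OLS slope equals the difference in group means, and that the interaction coefficient equals the difference between the two groups' treatment effects. It does not compute p-values.

```python
import random
from statistics import mean


def ols(X, y):
    """OLS coefficients from the normal equations (X'X) b = X'y, via Gauss-Jordan."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))]
         for i in range(k)]
    for col in range(k):
        # Partial pivoting, then clear this column from every other row.
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Synthetic data only: (algorithm, democrat, rating on a 1-7 scale).
rng = random.Random(0)
rows = []
for _ in range(400):
    algorithm = rng.randint(0, 1)  # 1 = "computer algorithms" wording
    democrat = rng.randint(0, 1)   # hypothetical party indicator
    rating = min(7, max(1, round(rng.gauss(4.0 + 0.4 * algorithm, 1.5))))
    rows.append((algorithm, democrat, rating))

y = [r for _, _, r in rows]

# Model 1: rating ~ intercept + algorithm.
simple = ols([[1, a] for a, _, _ in rows], y)
mean_alg = mean(r for a, _, r in rows if a == 1)
mean_mgr = mean(r for a, _, r in rows if a == 0)

# Model 2: rating ~ intercept + algorithm + democrat + algorithm * democrat.
full = ols([[1, a, d, a * d] for a, d, _ in rows], y)


def cell_mean(a, d):
    """Mean rating within one wording-by-party cell."""
    return mean(r for alg, dem, r in rows if alg == a and dem == d)


effect_dem = cell_mean(1, 1) - cell_mean(0, 1)
effect_rep = cell_mean(1, 0) - cell_mean(0, 0)

print("slope:", simple[1], "difference in means:", mean_alg - mean_mgr)
print("interaction:", full[3], "difference in effects:", effect_dem - effect_rep)
```

In practice a statistics package would be used instead of a hand-written solver, since it also reports standard errors and p-values for each coefficient.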

The survey materials and data for this experiment are available on our page on the Open Science Framework.
